
The Mechanics of Authenticity: Understanding How an AI Detector Functions

The landscape of digital creation has shifted fundamentally with the rapid progression of artificial intelligence. AI-driven tools for writing, imagery, and video have made high-speed production available to everyone. This surge in synthetic content has created a parallel need: the ability to tell what is human-made from what is machine-made. In this climate, the AI detector has emerged as an important instrument for maintaining transparency and digital trust.

An AI detector is a diagnostic system built to estimate the origin of digital media. Whether an educator is reviewing a semester paper or a publisher is confirming the originality of a feature story, these tools are now central to preserving the integrity of modern communication.

What Defines an AI Detector?

At its core, an AI detector is a digital analyst. It examines content to determine its likely source by scrutinizing linguistic fingerprints, structural patterns, and stylistic rhythms.

While advanced large language models like ChatGPT produce remarkably polished text, they often leave behind specific statistical markers. These include overly “perfect” grammar, highly symmetrical paragraph lengths, and a lack of the quirky unpredictability found in human thought. An AI detector looks for these traits using machine learning classifiers and statistical modeling.
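One of the markers above, symmetrical paragraph lengths, can be turned into a number. The sketch below is only an illustration: the function name `length_variation` and the choice of the coefficient of variation as the measure are assumptions, not something any particular detector is known to use.

```python
import statistics


def length_variation(text: str) -> float:
    """Coefficient of variation of paragraph lengths, counted in words.

    Values near 0 mean the paragraphs are almost the same length.
    Hypothetical helper for illustration only.
    """
    # Paragraphs are assumed to be separated by a blank line.
    paragraphs = [p for p in text.split("\n\n") if p.strip()]
    lengths = [len(p.split()) for p in paragraphs]
    if len(lengths) < 2:
        return 0.0  # not enough paragraphs to measure variation
    mean = statistics.mean(lengths)
    return statistics.pstdev(lengths) / mean if mean else 0.0


uniform = "one two three four\n\nfive six seven eight\n\nnine ten eleven twelve"
varied = (
    "short\n\n"
    "this paragraph runs on for quite a few more words than the first\n\n"
    "medium length here"
)

print(length_variation(uniform))  # identical lengths, so no variation
print(length_variation(varied))   # uneven lengths, so a larger value
```

A low value on its own proves nothing about authorship. It is one weak signal that a detector might combine with many others.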

Modern systems, such as the CudekAI AI Detector, take this further by offering sentence-level analysis and extensive multilingual support. Instead of looking only at surface-level word counts, these platforms evaluate deeper properties of a text, such as contextual flow and structural probability, to estimate its origin.

The Inner Workings of AI Detection Technology

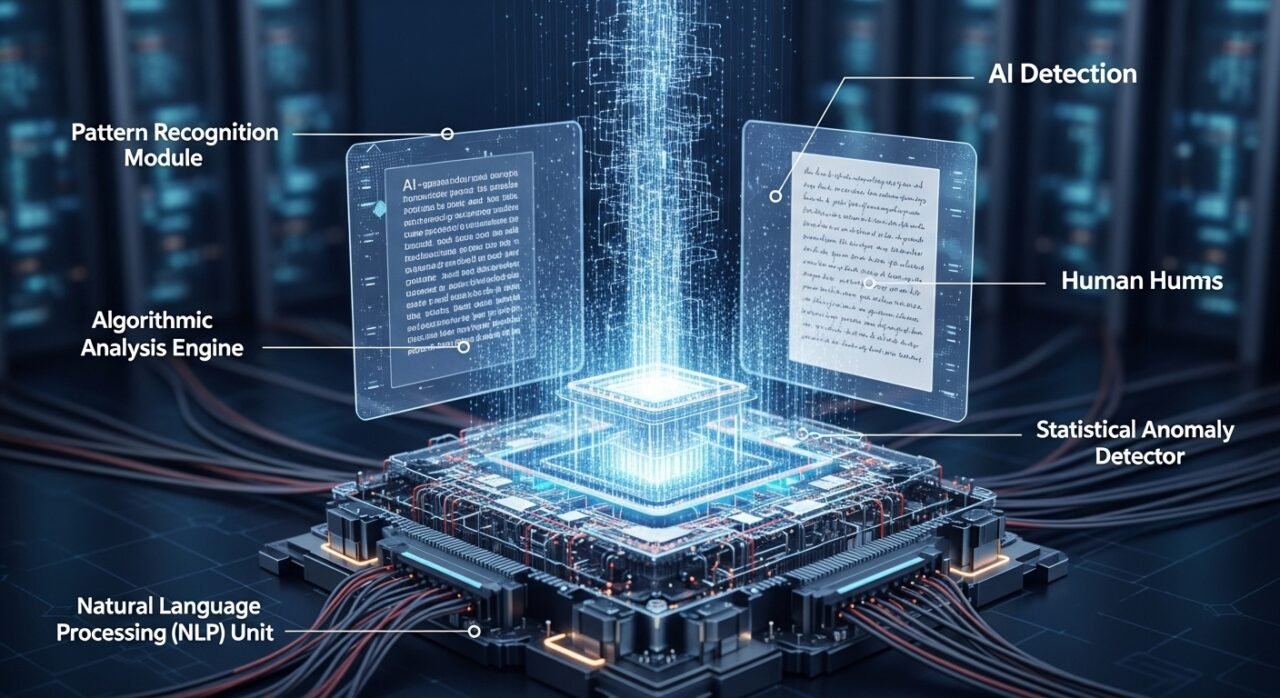

The operation of an AI detector typically involves a multi-stage process rooted in Natural Language Processing (NLP) and deep learning:

- Data Ingestion: The system receives the content, which could range from an academic essay to a corporate email or a marketing blog post.

- Pattern Recognition: The tool scans for “machine-like” indicators. It measures word frequency, syntax consistency, and perplexity (how unpredictable the text looks to a language model). Human writing tends to have higher perplexity, while AI-generated content tends to be more statistically predictable.

- Probability Scoring: After the scan, the system generates a percentage score. This reflects the likelihood that the material was generated by an algorithm.

- Detailed Interpretation: Leading tools highlight specific passages, allowing users to see exactly which parts of the text triggered the AI flag.
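The scanning, scoring, and highlighting steps above can be sketched in a few lines. This is a toy under loud assumptions: a smoothed unigram word model stands in for the large neural language models a real detector would use, and the names `perplexity`, `ai_likelihood`, and `flag_sentences`, along with the threshold and scale values, are hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase the text and keep alphabetic word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def train_unigram(corpus: str) -> tuple[Counter, int]:
    """Count word frequencies in a reference corpus."""
    tokens = tokenize(corpus)
    return Counter(tokens), len(tokens)


def perplexity(text: str, counts: Counter, total: int) -> float:
    """Perplexity of text under an add-one-smoothed unigram model."""
    tokens = tokenize(text)
    if not tokens:
        raise ValueError("text contains no word tokens")
    vocab = len(counts) + 1  # one extra slot shared by all unseen words
    log_prob = sum(math.log((counts[t] + 1) / (total + vocab)) for t in tokens)
    return math.exp(-log_prob / len(tokens))


def ai_likelihood(ppl: float, threshold: float = 10.0, scale: float = 2.0) -> float:
    """Map perplexity to a 0-100 score; lower perplexity gives a higher score.

    The logistic mapping and its parameters are assumptions for illustration.
    """
    z = max(-50.0, min(50.0, (ppl - threshold) / scale))  # clamp to avoid overflow
    return 100.0 / (1.0 + math.exp(z))


def flag_sentences(text: str, counts: Counter, total: int,
                   threshold: float = 10.0) -> list[tuple[str, bool]]:
    """Mark each sentence whose perplexity falls below the threshold."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return [
        (s, perplexity(s, counts, total) < threshold)
        for s in sentences
        if tokenize(s)  # skip fragments with no words
    ]


reference = "the cat sat on the mat. the cat sat on the mat."
counts, total = train_unigram(reference)
sample = "The cat sat on the mat. Quantum zebras juggle violet theorems!"

for sentence, flagged in flag_sentences(sample, counts, total):
    print(flagged, round(ai_likelihood(perplexity(sentence, counts, total)), 1), sentence)
```

The first sentence reuses words the model has seen, so its perplexity is low and it gets flagged. The second uses only unseen words and scores as less predictable. A real system would replace the unigram counts with token probabilities from a trained language model.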

This process depends on training models on massive datasets containing both human-written prose and millions of samples from AI generators, which lets the detector keep pace with evolving machine patterns.
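The idea of learning from labeled human and AI samples can be shown at miniature scale. In the sketch below, a nearest-centroid classifier over two hand-picked features stands in for the deep learning models that production detectors train. The four training sentences are invented toy data with arbitrary labels, and `features`, `train`, and `predict` are hypothetical names.

```python
import math
import statistics


def features(text: str) -> tuple[float, float]:
    """Two toy features: vocabulary variety and average word length."""
    words = text.lower().split()
    if not words:
        raise ValueError("text contains no words")
    type_token_ratio = len(set(words)) / len(words)
    avg_word_length = statistics.mean(len(w) for w in words)
    return (type_token_ratio, avg_word_length)


def train(samples: list[tuple[str, str]]) -> dict[str, tuple[float, ...]]:
    """Average the feature vectors of each label into one centroid."""
    by_label: dict[str, list[tuple[float, float]]] = {}
    for text, label in samples:
        by_label.setdefault(label, []).append(features(text))
    return {
        label: tuple(statistics.mean(column) for column in zip(*vectors))
        for label, vectors in by_label.items()
    }


def predict(model: dict[str, tuple[float, ...]], text: str) -> str:
    """Return the label whose centroid is closest to the text's features."""
    vector = features(text)
    return min(model, key=lambda label: math.dist(vector, model[label]))


# Invented toy data; real training sets contain millions of labeled samples.
toy_samples = [
    ("the model is the model and the model is good", "ai"),
    ("the tool is the tool and the tool is fast", "ai"),
    ("rain hammered my tin roof until dawn broke", "human"),
    ("grandma burned toast again so we laughed", "human"),
]
model = train(toy_samples)

print(predict(model, "the plan is the plan and the plan is set"))
print(predict(model, "crows argued loudly above our crooked fence"))
```

With so little data the classifier only memorizes the quirks of the toy sentences. The point is the workflow: extract features from labeled examples, fit a model, then score unseen text.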

The Growing Necessity of the AI Detector

As AI becomes capable of drafting technical manuals and creative copy in seconds, the “human element” has become a premium asset. An AI detector is now critical in several key areas:

- Academic Honesty: Educators use these tools to ensure student work represents genuine learning rather than a prompt-engineered shortcut.

- Editorial Credibility: For publishers and brand managers, original content is the foundation of audience trust. Verifying a draft before publication prevents the loss of authority.

- Combatting Misinformation: Synthetic content can be weaponized to spread false narratives. Detection systems act as a first line of defense in identifying automated propaganda.

- Regulatory Compliance: As laws in more jurisdictions begin to require the disclosure of AI-generated content, an AI detector helps organizations stay transparent and legally compliant.

The Tech Stack Behind Detection

Modern detection platforms rely on a sophisticated blend of technologies:

- Natural Language Processing (NLP): For analyzing tone, context, and the subtle “feel” of the writing.

- Deep Learning: Continuous training on new AI versions (like GPT-5 or Claude 4) to stay ahead of generative trends.

- Stylometric Analysis: Studying the unique “breath” of a writer’s style—something machines often fail to mimic convincingly.
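Stylometric analysis usually starts by reducing a text to measurable style features. The sketch below shows a handful of common ones. The function name `stylometric_features` and this particular feature set are assumptions chosen for illustration, not the feature set of any specific platform.

```python
import re
import statistics


def stylometric_features(text: str) -> dict[str, float]:
    """Compute a few simple style measurements for a piece of text."""
    # Split on whitespace that follows sentence-ending punctuation.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    words = re.findall(r"[A-Za-z']+", text.lower())
    if not sentences or not words:
        raise ValueError("text contains no sentences or words")
    sentence_lengths = [len(re.findall(r"[A-Za-z']+", s)) for s in sentences]
    return {
        "avg_sentence_length": statistics.mean(sentence_lengths),
        # Spread of sentence lengths: higher means a more uneven rhythm.
        "sentence_length_stdev": statistics.pstdev(sentence_lengths),
        # Share of distinct words: higher means a more varied vocabulary.
        "type_token_ratio": len(set(words)) / len(words),
        "avg_word_length": statistics.mean(len(w) for w in words),
        "commas_per_sentence": text.count(",") / len(sentences),
    }


sample_text = "I ran. Then, after a long pause, I walked all the way home. Done."
for name, value in stylometric_features(sample_text).items():
    print(f"{name}: {value:.3f}")
```

Feature vectors like this one can be compared against a writer's known work or fed into a classifier alongside the NLP and deep learning signals listed above.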

Advanced platforms like CudekAI integrate these into a scalable, multilingual interface. By supporting over 100 languages, they remove linguistic barriers and ensure that detection is accurate for users across the globe.

Advantages of Integration

Incorporating an AI detector into a professional workflow offers several practical benefits:

- Precision: It identifies subtle machine markers that the human eye might miss.

- Speed: Long-form documents that would take hours to manually vet are analyzed in seconds.

- SEO Integrity: Search engines prioritize original content. Using a detector helps marketers avoid “thin content” penalties.

- Brand Safety: It ensures that every piece of outward-facing communication aligns with the brand’s authentic voice.

Generation vs. Detection: A Balanced Ecosystem

It is a misconception to view AI generation and AI detection as enemies. In reality, they are two sides of the same coin. Generation drives innovation and efficiency, while detection provides the necessary accountability. This balance helps technology empower creators without eroding the value of human creativity.

As these tools evolve, they are moving beyond simple text. We are seeing the rise of detectors capable of verifying the authenticity of audio, video, and deepfake imagery, creating a comprehensive safety net for the digital age.

The Future of Detection

The role of the AI detector will only expand as generative models become more integrated into daily life. Future trends likely include:

- Real-time detection embedded directly into Content Management Systems (CMS).

- Enhanced “watermark” recognition for AI-generated media.

- Better cross-language analysis to prevent translation-based AI bypasses.

Conclusion

The AI detector represents a fundamental shift in how we verify digital content. By analyzing structural signals and linguistic patterns, these systems help safeguard originality and public trust. In a world increasingly shaped by algorithms, the ability to identify the likely source of content is a key protection for genuine human creativity and ethical communication.